Shawn Hogan and Brian Dunning didn’t break eBay’s affiliate system. They used it exactly as designed, which is the more important point.

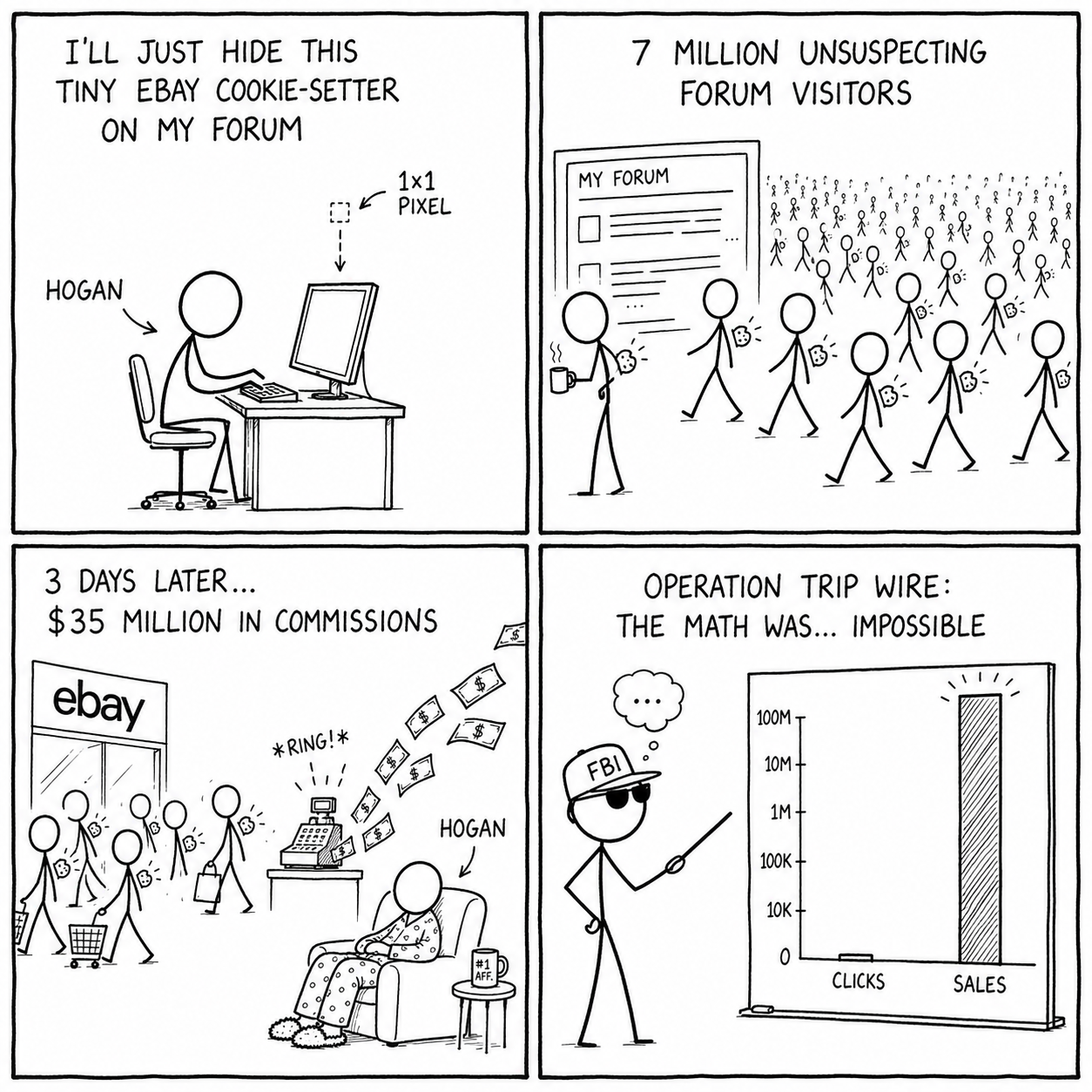

Between roughly 2006 and 2010, the two collected a combined $35M in affiliate commissions from eBay by planting tracking cookies on millions of unsuspecting browsers without a single user clicking anything. The mechanism was a 1×1 <img> tag. The vulnerability was a conceptual mismatch baked into every affiliate program of that era: a cookie is set in response to an HTTP request, not in response to intent.

This is not a story about a clever hack. It’s a story about an attribution system whose designers never asked “what happens if someone lies to it?” and what the correct engineering answer looks like when you do.

The HTTP Primitive at the Center of Everything

To understand the exploit you need to understand one thing precisely:

A browser executing <img src="https://rover.ebay.com/rover/1/...?campid=AFFILIATE_ID"> fires an unconditional GET request to that URL. The server at that URL cannot determine, from the request alone, whether a human deliberately clicked a link, whether any human was involved at all, or whether the request came from a JavaScript loop running 50,000 times a second. HTTP carries none of that context.

eBay’s affiliate endpoint responded to any GET with:

    HTTP/1.1 200 OK
    Set-Cookie: affiliate=HOGAN; Domain=.ebay.com; Max-Age=604800
    Content-Type: image/gif
    Content-Length: 43

    [1×1 transparent GIF bytes]

The cookie is set. The attribution chain is established. The user never saw a link.

This is the foundational asymmetry: the server can record that a request happened, but not whether the request represents a human decision. Any system that conflates “a request arrived” with “a user clicked” has a hole. In 2006, every major affiliate program had this hole, and most treated cookies as ground truth.
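To make the asymmetry concrete, here is a minimal sketch of a naive attribution endpoint, exercised with Go's `net/http/httptest`. The handler, the `/rover` path, and the `cookieFor` helper are illustrative assumptions, not eBay's actual implementation; the point is only that a bare GET with no click behind it gets the same cookie a real click would.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// pixelHandler mimics a naive affiliate endpoint (hypothetical): any GET that
// carries a campid receives an attribution cookie, with no check of intent.
func pixelHandler(w http.ResponseWriter, r *http.Request) {
	campID := r.URL.Query().Get("campid")
	http.SetCookie(w, &http.Cookie{
		Name:   "affiliate",
		Value:  campID,
		MaxAge: 604800, // 7 days, matching the response shown above
	})
	w.Header().Set("Content-Type", "image/gif")
	w.WriteHeader(http.StatusOK)
}

// cookieFor issues a bare GET, as a hidden <img> would, and returns the
// affiliate value the server asked the browser to store.
func cookieFor(campID string) string {
	req := httptest.NewRequest(http.MethodGet, "/rover?campid="+campID, nil)
	rec := httptest.NewRecorder()
	pixelHandler(rec, req)
	for _, c := range rec.Result().Cookies() {
		if c.Name == "affiliate" {
			return c.Value
		}
	}
	return ""
}

func main() {
	// No click, no user gesture, no Referer: the cookie is set anyway.
	fmt.Println("cookie set for:", cookieFor("HOGAN"))
}
```

Nothing in the request distinguishes this call from a deliberate click, which is why the fix has to come from somewhere other than the endpoint's view of a single request.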

The Exploit, Layer by Layer

Baseline: The Naked Stuffer

The minimum viable implementation is exactly what you’d write in five minutes:

    <img
      src="https://rover.ebay.com/rover/1/711-53200-19255-0/1?campid=5337337337"
      width="1"
      height="1"
      style="position:absolute;left:-9999px;top:-9999px;"
    />

Embed this in any page with real traffic. Every visitor gets Hogan’s affiliate cookie. When they organically buy something on eBay days later, because they were already going to buy it, Hogan collects the commission. This is pure fraud. It’s also operationally stupid in a specific way: the Referer header on every request points directly at hogans-forum.com.

Layer 1: Referrer Laundering via Redirect Chains

A moderately paranoid stuffer routes the pixel request through redirect chains designed to strip the Referer header before it reaches the affiliate endpoint:

    hogans-forum.com
        │  (hidden <img> loads)
        ▼
    cdn-assets-prod.example.net/img.gif
        │  (302 redirect, strips Referer)
        ▼
    static-content-3.example.org/track.gif
        │  (302 redirect)
        ▼
    analytics-pixel.example.io/p.gif
        │  (302 redirect)
        ▼
    rover.ebay.com/?campid=...

Each intermediate hop is configured to prevent Referer propagation:

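    location /img.gif {
        # Referer is a request header sent by the browser, so the server cannot
        # delete it directly (proxy_hide_header only filters upstream response
        # headers). Referrer-Policy on the redirect response asks the browser
        # to omit Referer on the next hop instead.
        add_header Referrer-Policy "no-referrer";
        return 302 https://next-hop.example.org/track.gif;
    }

The Referrer-Policy header postdates the eBay case, so this config shows the technique in modern terms rather than what ran in 2006. When eBay’s fraud team inspects the traffic, they see affiliate requests arriving from analytics-pixel.example.io, a domain with no obvious ownership connection to Hogan. Tracing that domain back to its owner takes a subpoena. Noticing it’s anomalous requires someone running the right query.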

Layer 2: Behavioral Filters to Reduce Statistical Signatures

A sophisticated operator adds client-side logic to avoid the statistical footprints that fraud detection looks for:

    window.addEventListener('load', () => {
      // Frequency cap: stuff each user at most once per day
      if (document.cookie.includes('_stf=1')) return;
      document.cookie = '_stf=1; max-age=86400; path=/';

      // Delay fire: instant pixel load on page load is a bot signal
      setTimeout(() => {
        fetch('https://geo.example.com/whoami')
          .then(r => r.json())
          .then(({ country }) => {
            // High-payout geos only. Assumes the lookup returns ISO 3166-1
            // alpha-2 codes, where the United Kingdom is 'GB', not 'UK'.
            if (!['US', 'GB', 'CA', 'AU'].includes(country)) return;
            const px = new Image();
            // Cache-bust forces the browser to actually fire the request
            px.src = `https://cdn-assets-prod.example.net/img.gif?t=${Date.now()}`;
          });
      }, 4000);
    });

This addresses several detection vectors simultaneously: it avoids duplicate cookies that would skew conversion ratios, geo-filters to avoid low-value traffic that might dilute payout-to-cost math, delays the fire to avoid the “50 simultaneous resource loads” bot signature, and cache-busts to prevent browser caching from silently dropping the request.

The geo-filter is particularly instructive: the operator is trading reduced reach for cleaner per-affiliate statistics. The signal-to-noise tradeoff is deliberate.

Layer 3: Stuffing-as-a-Service

Hogan and Dunning were sophisticated relative to the era, but they weren’t the only players. SauceKit, sold for ~$450/month at its peak, was a turnkey service that generated rotated, redirect-chained pixel URLs on behalf of subscribers. Operators pasted one line into their site. The kit handled everything: redirect chains, frequency capping, geo-filtering, rotation across multiple affiliate IDs so no single ID accumulated anomalous numbers.

This is worth noting from a systems perspective: the fraud scaled by becoming a platform. The operators of SauceKit centralized the complexity and distributed the risk. Christopher Kennedy, who ran it, got six months and ~$400K in restitution when it collapsed.

The Detection Failure and What Caught Them

The crime was invisible to individual users. The signal existed entirely in eBay’s own database.

A legitimate high-performing affiliate has a funnel that looks roughly like this:

    Impressions: 1,000,000
    Clicks:      20,000 (2.0% CTR)
    Sales:       400 (2.0% CVR on clicks)
    Commission:  $1,200

Hogan’s funnel in eBay’s billing system looked like this:

    Impressions: 1,000,000
    Clicks:      50 (0.005% CTR)
    Sales:       8,000 (16,000% CVR, impossible)
    Commission:  $32,000

Recorded sales exceeded recorded clicks by a factor of 160. People were completing purchases against an affiliate tag that had no corresponding click event in the attribution log. In a mathematically coherent system, that’s a schema constraint violation. In eBay’s 2006 system, it was a line in the commission report.
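The arithmetic behind the two funnels is easy to check by hand. The sketch below recomputes the click-through and conversion rates from the illustrative figures above; `ratePercent` is a helper name introduced here for the example.

```go
package main

import "fmt"

// ratePercent returns numerator/denominator expressed as a percentage.
// Multiplying before dividing keeps these particular inputs exact.
func ratePercent(numerator, denominator float64) float64 {
	return numerator * 100 / denominator
}

func main() {
	// Legitimate affiliate: 20,000 clicks on 1,000,000 impressions, 400 sales.
	fmt.Printf("legit   CTR %.3f%%  CVR %.1f%%\n",
		ratePercent(20000, 1000000), ratePercent(400, 20000))

	// Stuffed funnel: 50 clicks on 1,000,000 impressions, 8,000 sales.
	fmt.Printf("stuffed CTR %.3f%%  CVR %.1f%%\n",
		ratePercent(50, 1000000), ratePercent(8000, 50))
}
```

A conversion rate of 16,000% means 160 sales per recorded click, which no volume of real clicks can produce.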

The query that exposed it was trivial:

    SELECT
      affiliate_id,
      SUM(sales) AS total_sales,
      SUM(clicks) AS total_clicks,
      -- NULLIF avoids division by zero; affiliates with sales but zero
      -- clicks get a NULL cvr and deserve a separate look
      SUM(sales) * 1.0 /
        NULLIF(SUM(clicks), 0) AS cvr
    FROM affiliate_metrics
    WHERE period = '2006-Q3'
    GROUP BY affiliate_id
    ORDER BY cvr DESC
    LIMIT 50;

The top results had CVRs in the hundreds or thousands of percent. Anyone who ran this query would have seen it immediately. Nobody ran it for years.

Once eBay flagged the outliers and brought in the FBI’s “Operation Trip Wire,” the technical evidence was straightforward: federal agents visited Hogan’s sites with instrumented browsers, captured every HTTP request, and watched eBay affiliate cookies appear in their cookie jars without any interaction. That’s a packet capture of a federal crime in progress. Subpoenas tied the redirect-chain domains back to Hogan through domain registration records.

The charges were filed under 18 U.S.C. § 1343: wire fraud. Prosecutors successfully argued that causing a server to set a cookie under false pretenses constitutes a fraudulent transmission across interstate wires. Hogan pleaded guilty, served five months, paid a $25,000 fine. Dunning got fifteen months.

Note: The wire fraud theory from this case became the template for later cookie stuffing prosecutions, and the current PayPal Honey class actions (civil suits, covered later in this article) allege structurally similar conduct. Causing a server to record a fraudulent attribution event is the wire transmission. The <img> tag is the instrument.

How This Applies to Distributed Systems

Cookie stuffing is a narrow domain, but the underlying failure modes are general. Every distributed system that records events for billing, compensation, or resource allocation faces the same class of problem: an external actor can generate events that look legitimate but aren’t.

The Core Invariant: Events Must Be Verified, Not Trusted

eBay’s system treated the presence of a cookie as proof of a click. It wasn’t. The cookie was a claim, and the system had no mechanism to verify whether the claim matched a server-recorded event.

This is the same failure mode as:

- Accepting a JWT and paying a commission without verifying the signing key against your own keystore

- Crediting a payment based on a webhook without verifying the HMAC signature

- Releasing a resource based on a client-supplied token without checking whether your server issued that token

The correct invariant in every case: client-supplied credentials are claims that require server-side corroboration. A cookie, a token, a webhook event; none of these prove anything on their own.
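As one instance of server-side corroboration, here is a minimal sketch of webhook signature checking with Go's standard `crypto/hmac` package. The secret, the payload shape, and the `sign`/`verify` names are assumptions made for illustration; a real integration would follow whatever signing scheme the sender documents.

```go
package main

import (
	"crypto/hmac"
	"crypto/sha256"
	"encoding/hex"
	"fmt"
)

// sign returns the hex-encoded HMAC-SHA256 of body under secret.
func sign(secret, body []byte) string {
	mac := hmac.New(sha256.New, secret)
	mac.Write(body)
	return hex.EncodeToString(mac.Sum(nil))
}

// verify recomputes the signature from the raw body and compares it to the
// claimed one in constant time. The claim alone is never trusted.
func verify(secret, body []byte, claimedHex string) bool {
	claimed, err := hex.DecodeString(claimedHex)
	if err != nil {
		return false
	}
	mac := hmac.New(sha256.New, secret)
	mac.Write(body)
	return hmac.Equal(claimed, mac.Sum(nil))
}

func main() {
	secret := []byte("demo-shared-secret") // hypothetical value
	body := []byte(`{"order_id":"o-1","amount":4200}`)
	sig := sign(secret, body)

	fmt.Println(verify(secret, body, sig)) // true: body matches signature

	tampered := []byte(`{"order_id":"o-1","amount":9900}`)
	fmt.Println(verify(secret, tampered, sig)) // false: amount was altered
}
```

The same shape applies to the other two bullets: the server recomputes or looks up something only it can produce, then compares.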

Idempotency and Event Deduplication

The stuffer’s frequency cap (the _stf=1 cookie) is a client-side approximation of idempotency. It fails as a security control because the client controls it; you can clear cookies and get re-stuffed. But the underlying need is real: in any event-driven billing system, the same causal event must not result in multiple commission payments.

The server-side version of this is a deduplication store:

    // Server-side click registration with idempotency guarantee.
    // rdb (a go-redis client) and db (the durable store) are assumed to be
    // package-level handles initialized elsewhere.
    func RegisterClick(ctx context.Context, clickID, affiliateID, sessionID string) error {
        key := fmt.Sprintf("click:%s", clickID)

        // NX = only set if not exists; expiry = commission window
        set, err := rdb.SetNX(ctx, key, affiliateID, 7*24*time.Hour).Result()
        if err != nil {
            return fmt.Errorf("dedup store unavailable: %w", err)
        }
        if !set {
            // Click ID already registered; idempotent, not an error
            return nil
        }

        // Write to durable store only after dedup check passes.
        // If this insert fails, a retry will hit the dedup key and return
        // early, so callers should delete the key or alert on this error.
        return db.InsertClick(ctx, clickID, affiliateID, sessionID, time.Now())
    }

Note what this requires: the click ID must be server-issued, not client-supplied. If affiliates can generate their own click IDs, they can generate clicks. The dedup store only works if the token namespace is controlled by the system being protected.

Retries and the “At Least Once” Problem

Server-to-server postback systems (the modern replacement for cookies) are typically implemented as HTTP callbacks from the merchant to the affiliate’s tracking endpoint:

- Conversion happens

- Merchant fires POST /conversion { click_id, order_id, amount }

- 2xx: success, done

- 5xx: retry with exponential backoff

- timeout: retry with exponential backoff

The retry logic creates a subtle problem: if the affiliate’s endpoint processes the postback but returns a 5xx before the merchant’s system records the acknowledgment, the merchant retries, and the affiliate potentially records two commissions for one conversion.

The fix is standard idempotency: the merchant sends a stable conversion ID, the affiliate’s endpoint upserts on that ID, and the merchant records the final acknowledged state:

    func HandleConversionPostback(w http.ResponseWriter, r *http.Request) {
        var payload ConversionPayload
        if err := json.NewDecoder(r.Body).Decode(&payload); err != nil {
            http.Error(w, "bad request", http.StatusBadRequest)
            return
        }

        // Upsert: idempotent by conversion_id
        if err := db.UpsertConversion(r.Context(), payload.ConversionID, payload); err != nil {
            // Return 5xx so merchant retries, but our upsert ensures dedup
            http.Error(w, "internal error", http.StatusInternalServerError)
            return
        }

        w.WriteHeader(http.StatusOK)
    }

The pattern: accept retries, guarantee idempotency, acknowledge only after durable write.

Observability: The Query Nobody Ran

eBay’s database contained the evidence of the fraud from the first stuffed cookie. The problem wasn’t missing data; it was missing instrumentation. Nobody was continuously computing sales / clicks per affiliate and alerting on outliers.

In a production fraud detection system, this metric runs continuously. The implementation is less interesting than the discipline: any system that pays out money based on recorded events must have a continuous, automated reconciliation process that flags statistical impossibilities.

In practice this means:

    type AffiliateMetrics struct {
        AffiliateID string
        Clicks      int64
        Sales       int64
        CVR         float64
        FlaggedAt   *time.Time
    }

    func (m *AffiliateMetrics) IsStatisticallyAnomalous() bool {
        // CVR above 0.5 (50%) is implausible for legitimate affiliates
        // at scale; organic traffic converts at 1-5%
        if m.Clicks > 100 && m.CVR > 0.50 {
            return true
        }
        // Essentially zero clicks with non-zero sales
        if m.Sales > 50 && m.Clicks < 5 {
            return true
        }
        return false
    }

The thresholds are a business decision. The enforcement mechanism is not: flagged affiliates must have commissions withheld pending review, not paid and clawed back later. Claw-backs are expensive, contentious, and often legally complex. Prevention is cheaper.

Concurrency Control: When Two Stuffs Race

In a high-traffic environment, two simultaneous page loads from the same user can both fire the stuffing pixel before either sets the frequency-cap cookie. This is a standard TOCTOU (time-of-check to time-of-use) race condition. The client-side frequency cap doesn’t prevent it; only a server-side atomic check does.

This generalizes: client-side rate limiting and deduplication are always advisory, never authoritative. Any security or financial control that depends on the client behaving correctly is not a control.
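A minimal sketch of the authoritative version: the check and the set happen under one lock, so concurrent callers cannot both pass the check. `DedupStore`, `ClaimOnce`, and `raceFor` are names invented for this example, and the in-memory map stands in for whatever atomic set-if-absent primitive the real store provides.

```go
package main

import (
	"fmt"
	"sync"
)

// DedupStore is an in-memory stand-in for a server-side store that offers an
// atomic set-if-absent operation.
type DedupStore struct {
	mu   sync.Mutex
	seen map[string]struct{}
}

func NewDedupStore() *DedupStore {
	return &DedupStore{seen: make(map[string]struct{})}
}

// ClaimOnce returns true for exactly one caller per key. The lookup and the
// insert share one critical section, which closes the TOCTOU window.
func (s *DedupStore) ClaimOnce(key string) bool {
	s.mu.Lock()
	defer s.mu.Unlock()
	if _, ok := s.seen[key]; ok {
		return false
	}
	s.seen[key] = struct{}{}
	return true
}

// raceFor fires n concurrent claims for the same key and counts the winners.
func raceFor(store *DedupStore, key string, n int) int {
	var wg sync.WaitGroup
	var mu sync.Mutex
	winners := 0
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			if store.ClaimOnce(key) {
				mu.Lock()
				winners++
				mu.Unlock()
			}
		}()
	}
	wg.Wait()
	return winners
}

func main() {
	store := NewDedupStore()
	fmt.Println(raceFor(store, "user-42:2024-01-01", 100)) // 1
}
```

However the goroutines interleave, exactly one claim wins, which is the property a client-side cookie check cannot provide.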

The Modern Defense Stack

The 2006 eBay system had one implicit trust assumption: the cookie tells the truth. Modern affiliate systems have replaced that with a defense-in-depth stack where each layer is independently verifiable.

Server-Issued, One-Time Click Tokens

Instead of setting a cookie at the affiliate endpoint, the merchant issues a server-side click token at the moment the user arrives from an affiliate link:

- User clicks https://merchant.com/?campid=AFFILIATE_ID

- Merchant backend: generate click_id = UUID()

- Log to database: { click_id, affiliate_id, ip, user_agent, referer, timestamp }

- Set-Cookie: click_id=<click_id>; HttpOnly; Secure; SameSite=Lax

- At checkout: look up click_id in database, pay only if record exists

The critical property: click_id only exists if the merchant’s server created it. An affiliate cannot manufacture a click_id by loading a hidden image. The token namespace is controlled by the system being protected.

SameSite and the Death of the Pixel Attack

The SameSite=Lax attribute on a cookie means the browser will not send it on cross-site subresource requests, which include <img>, <iframe>, and background fetch() calls. More relevant for offensive cookie setting: Chromium-based browsers, which have treated cookies without a SameSite attribute as Lax since Chrome 80, also refuse to store a Lax or Strict cookie delivered in the response to a cross-site subresource request.

    Set-Cookie: aff_click=abc123; SameSite=Lax; Secure; HttpOnly

Combined with Safari’s Intelligent Tracking Prevention and Firefox’s Enhanced Tracking Protection, both of which block or partition third-party cookies by default, the classic pixel stuffer’s <img> tag can no longer plant a usable cookie for a merchant that marks its attribution cookie this way. A cookie explicitly set with SameSite=None remains exposed in browsers that still accept third-party cookies. For everyone else, the browser no longer cooperates with the attack.

This is the single most impactful defense, and it required no changes to affiliate program logic. It’s a platform-level fix that made an entire attack class obsolete.
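On the merchant side, opting in is a matter of setting the attributes when the cookie is written. A minimal sketch with Go's `net/http`, where `setClickCookie`, `headerFor`, and `hasAttr` are helper names introduced for the example:

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// setClickCookie writes the attribution cookie with the attributes discussed
// above: not readable from scripts, HTTPS only, and SameSite=Lax.
func setClickCookie(w http.ResponseWriter, clickID string) {
	http.SetCookie(w, &http.Cookie{
		Name:     "aff_click",
		Value:    clickID,
		Path:     "/",
		Secure:   true,
		HttpOnly: true,
		SameSite: http.SameSiteLaxMode,
	})
}

// headerFor returns the raw Set-Cookie header produced for clickID.
func headerFor(clickID string) string {
	rec := httptest.NewRecorder()
	setClickCookie(rec, clickID)
	return rec.Header().Get("Set-Cookie")
}

// hasAttr reports whether the Set-Cookie header contains attr.
func hasAttr(header, attr string) bool {
	return strings.Contains(header, attr)
}

func main() {
	h := headerFor("abc123")
	fmt.Println(h)
	fmt.Println("lax:", hasAttr(h, "SameSite=Lax"))
}
```

Setting the attribute explicitly avoids relying on each browser's default, which has differed across vendors and versions.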

Server-to-Server Postbacks: Removing the Browser From the Loop

The most robust architecture skips browser cookies entirely for conversion attribution:

- User arrives via affiliate link

- Merchant logs click_id ↔ session server-side

- User completes purchase

- Merchant fires authenticated POST to affiliate tracking endpoint

- Affiliate verifies signature, records conversion

- Commission calculated and eventually paid

There is no browser component in the conversion attribution step. The affiliate cannot inject a fraudulent conversion because every conversion event originates in the merchant’s checkout system, which the affiliate doesn’t control. The signature protects the channel: a third party without the key cannot forge an event in either direction.

The tradeoff: this requires a pre-established trust relationship (shared secret or mutual TLS), a reliable callback URL from the affiliate, and retry/deduplication logic on both sides. It’s operationally heavier than cookie-based attribution. It’s also the only approach described here that holds up in the presence of an adversarial affiliate.

Continuous Statistical Fraud Detection

The query that caught Hogan runs continuously in any serious modern system. The metrics are richer:

Click-to-conversion ratio: The primary Hogan signal. CVR above ~30% on any affiliate with more than a few hundred attributed sales is suspicious. CVR above 100% of clicks is definitionally impossible and should trigger automatic hold.

Conversion latency distribution: Real users who click affiliate links and buy typically convert within minutes (high intent) or days (browse and return). Cookie stuffing victims have a uniform-random latency distribution because the “click” occurred at an arbitrary time unrelated to purchase intent. A KS test comparing an affiliate’s latency distribution to the population baseline is a strong signal.

Click velocity distribution: Human click patterns are bursty and correlated with time-of-day and day-of-week. Bot-driven or scripted pixel fires are often suspiciously uniform, or suspiciously concentrated at off-peak hours when monitoring is lighter.

IP and User-Agent diversity: A single content site’s traffic should have geographic and device diversity proportional to its audience. An affiliate claiming 50,000 clicks from a forum that Alexa ranks at #2,000,000 is implausible.

Cookie-set-to-click-log ratio: In a server-token system, this ratio must be 1:1. Any commission event that lacks a corresponding server-side click log entry is unpayable.
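For the latency check, the two-sample Kolmogorov-Smirnov statistic is simple enough to compute directly. The sketch below is a plain implementation over sorted samples, not a library call; `ksStatistic` and `ksCritical05` are names chosen for the example, the latency figures in `main` are made up, and the 1.36 coefficient is the usual large-sample approximation for a 5% significance level.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// ksStatistic returns the two-sample Kolmogorov-Smirnov statistic: the
// largest absolute gap between the empirical CDFs of a and b.
func ksStatistic(a, b []float64) float64 {
	if len(a) == 0 || len(b) == 0 {
		return 0
	}
	x := append([]float64(nil), a...)
	y := append([]float64(nil), b...)
	sort.Float64s(x)
	sort.Float64s(y)

	i, j := 0, 0
	d := 0.0
	for i < len(x) && j < len(y) {
		v := math.Min(x[i], y[j])
		// Advance both samples past v so ties are counted on both sides.
		for i < len(x) && x[i] <= v {
			i++
		}
		for j < len(y) && y[j] <= v {
			j++
		}
		gap := math.Abs(float64(i)/float64(len(x)) - float64(j)/float64(len(y)))
		if gap > d {
			d = gap
		}
	}
	return d
}

// ksCritical05 approximates the 5% critical value for sample sizes n and m.
func ksCritical05(n, m int) float64 {
	return 1.36 * math.Sqrt(float64(n+m)/float64(n*m))
}

func main() {
	// Hypothetical click-to-purchase latencies in hours.
	baseline := []float64{0.1, 0.2, 0.3, 0.5, 48, 72, 96, 120} // bimodal
	suspect := []float64{10, 30, 50, 70, 90, 110, 130, 150}    // evenly spread

	d := ksStatistic(baseline, suspect)
	fmt.Printf("D = %.3f, 5%% critical value = %.3f\n",
		d, ksCritical05(len(baseline), len(suspect)))
}
```

With samples this small the critical value is large, so in practice the comparison only becomes informative once an affiliate has a few hundred attributed sales.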

Production Failure Case: The Honey Variant

The PayPal Honey browser extension lawsuits (filed 2024 to 2025) allege a structurally similar scheme adapted for the hardened post-SameSite environment.

The alleged mechanism: Honey installs as a browser extension, which gives it elevated permissions compared to a webpage. When a user is on a merchant’s checkout page, Honey reportedly replaces the existing affiliate cookie with its own affiliate ID, not by loading a hidden pixel, but from inside the user’s browser, on the merchant’s own domain.

This bypasses SameSite entirely: the extension runs in the same origin context as the merchant page. It bypasses server-token systems: the token was legitimately issued to a real affiliate who drove the traffic; Honey just replaces the attribution claim at the last moment. And it bypasses statistical detection: Honey’s CVR is plausibly high because it only fires on users who are literally in the middle of checking out.

The legal theory echoes the Hogan case. These are civil suits rather than criminal prosecutions, but the core allegation is structurally the same: causing the merchant’s server to record a false attribution at checkout is the wrongful act, regardless of the technical mechanism.

The engineering lesson: defenses must be re-evaluated whenever the trust boundary shifts. The defenses built after Hogan assumed the browser was the hostile surface. Honey moved the attack to inside the browser, where it has user-granted permissions. The defense now needs to move server-side: merchants need server-to-server postbacks that confirm attribution at the click level, not the cookie level.

Implementation Patterns

1. Verified Click Registration

The key invariant: the cookie stores a pointer to a server record, not a self-contained credential. If the server record doesn’t exist, there’s nothing to pay against.

    on GET /redirect?campid=AFFILIATE_ID:
        click_id = generate_uuid()
        db.insert({ click_id, affiliate_id, ip, user_agent, referer, timestamp })
        set_cookie("click_id", click_id, samesite=Lax, httponly, secure)
        redirect to merchant

    on checkout conversion:
        click_id = read_cookie("click_id")
        click = db.lookup(click_id)
        if click is null → no payout
        if now - click.timestamp > 30d → no payout
        # Atomic set-if-absent: a separate check followed by a set would race
        if not dedup_store.set_if_absent(conversion_id, ttl=30d) → no payout
        pay(click.affiliate_id, amount)

The dedup store (Redis SET NX or a unique constraint on conversion_id) prevents the same checkout event from paying twice, which is critical when the postback layer retries on 5xx.

2. Anomaly Detection

Run continuously against a rolling window (hourly or per billing cycle). Flag, don’t pay:

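    for each affiliate in window:
        cvr = sales / max(clicks, 1)
        if cvr > 0.30 and sales > 50:
            flag(IMPLAUSIBLE_CVR)
        if sales > 20 and clicks < 5:
            flag(ZERO_CLICK_SALES)

        latencies = [sale_time - click_time for each sale]
        if ks_statistic(latencies, baseline_latencies) > ks_threshold:
            flag(ATYPICAL_LATENCY)
        if coefficient_of_variation(latencies) < 0.10:
            flag(NEAR_CONSTANT_LATENCY)
        if max(latencies) - min(latencies) < 5min:
            flag(SUSPICIOUS_BURST)

The latency distribution check is the strongest signal for cookie stuffing specifically. Legitimate affiliate traffic is bimodal: some users click and buy immediately, others return days later. Stuffing victims are unrelated to the “click” event, so their purchase timing is random relative to it and spreads roughly uniformly across the cookie window. The KS comparison against the population baseline catches that shape; baseline_latencies and ks_threshold are placeholders to be calibrated on real data. The coefficient-of-variation check catches a different pattern: a uniform spread has a CV near 0.58, so a value below 0.10 means near-identical latencies, which points to scripted conversions rather than stuffing.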

3. Server-to-Server Postback

Remove the browser from conversion attribution entirely. The merchant fires an authenticated callback at checkout; no cookie is involved in the attribution decision.

    # Merchant side: fires at checkout
    payload = { click_id, conversion_id, order_id, amount, timestamp }
    sig = HMAC-SHA256(payload, shared_secret)

    POST affiliate.example.com/conversion
        X-Merchant-Signature: sig
        X-Idempotency-Key: conversion_id
        body: payload

    retry on 5xx or timeout: exponential backoff, cap at 30s, max 5 attempts
    do not retry on 4xx: client error, log and alert

    # Affiliate side: receives the callback
    verify HMAC-SHA256(body, shared_secret) == X-Merchant-Signature  (constant-time compare)
    if invalid → reject 401
    upsert conversion by conversion_id
    return 200

The HMAC signature means nobody without the shared secret can inject a conversion event into the affiliate’s endpoint, and because the merchant originates every event from its own order records, the affiliate has nothing to forge on the paying side. The idempotency key on the affiliate’s upsert means the merchant can safely retry without double-counting.

Failure Mode Summary

| Attack Vector | eBay 2006 | Modern Hardened System |
|---|---|---|
| Hidden img pixel | Sets cookie, earns commission | SameSite blocks cross-site cookie set |
| Redirect chain | Hides origin | Click token requires server log entry |
| High-volume stuffing | Undetected for years | Statistical anomaly detection flags |
| Browser extension | N/A | Bypasses cookie defenses; needs S2S postback attribution |
| Click ID forgery | N/A | IDs are server-issued UUIDs |
| Replay attack | Possible | Idempotency key + dedup store |

The Takeaway

Hogan’s mistake wasn’t leaving statistical fingerprints in eBay’s database. The fingerprints were unavoidable; that’s what committing fraud at scale does. His mistake was assuming nobody would look.

Attribution systems that pay money are security systems. Every system that allocates resources or revenue based on recorded events must be designed with the assumption that some actors will try to lie to it, and that the lies will be caught not by preventing the input, but by continuous reconciliation of the recorded state against what’s statistically possible.

The correct design principles, restated in engineering terms:

Credentials are claims, not proofs. Every client-supplied token must be corroborated by a server-side record that the client cannot have manufactured.

Statistical impossibility is a hard stop. A CVR above 100% of recorded clicks is not a rounding error. It’s a schema violation. The system should refuse to pay it, not flag it for later review.

The browser is a hostile runtime. Any security or financial control that depends on browser behavior (cookies, JavaScript, extensions) must be assumed to be bypassable. Put the control server-side.

Continuous reconciliation is not optional. The evidence of Hogan’s fraud existed in eBay’s database from day one. The failure was the absence of a process that looked at it.

The arms race continues. When SameSite killed the pixel attack, Honey moved into the browser extension layer. When S2S postbacks close that vector, the attack will move somewhere else. The query that exposes it will probably still be a variant of SELECT sales / clicks AS ratio FROM affiliate_metrics ORDER BY ratio DESC.

Run it continuously. Alert on the outliers. Don’t wait for the FBI.